5 Mar 202619 minute read

CI/CD for Context in Agentic Coding: Same Pipeline, Different Rules

5 Mar 202619 minute read

We used to manage code. Now we manage context - the instructions, skills, and agent configuration that steer AI coding agents.

The equivalent of tests for context are evals. An eval defines a scenario - a task you give the agent, the context it runs with, and the expected behavior. You run it, a judge (another model, a script, or a human) checks the output against your criteria, and you get a signal: did the agent do what the context instructed?

At Tessl, we think about this as three levels: review evals are deterministic checks against a specific criterion, pass or fail; task evals put the agent to work with your context in isolation, does it behave correctly given what you've told it; project evals bring the full codebase into scope, does it behave correctly in your actual environment. Each level answers a different question.

Like unit tests and integration tests, you build a suite of these. The natural next step is to wire them into your CI/CD pipeline - run them on every commit, gate on them. That instinct is right. But as you go, you'll find that evals don't behave quite like tests, and the assumptions you carried over from code don't all survive contact with context.

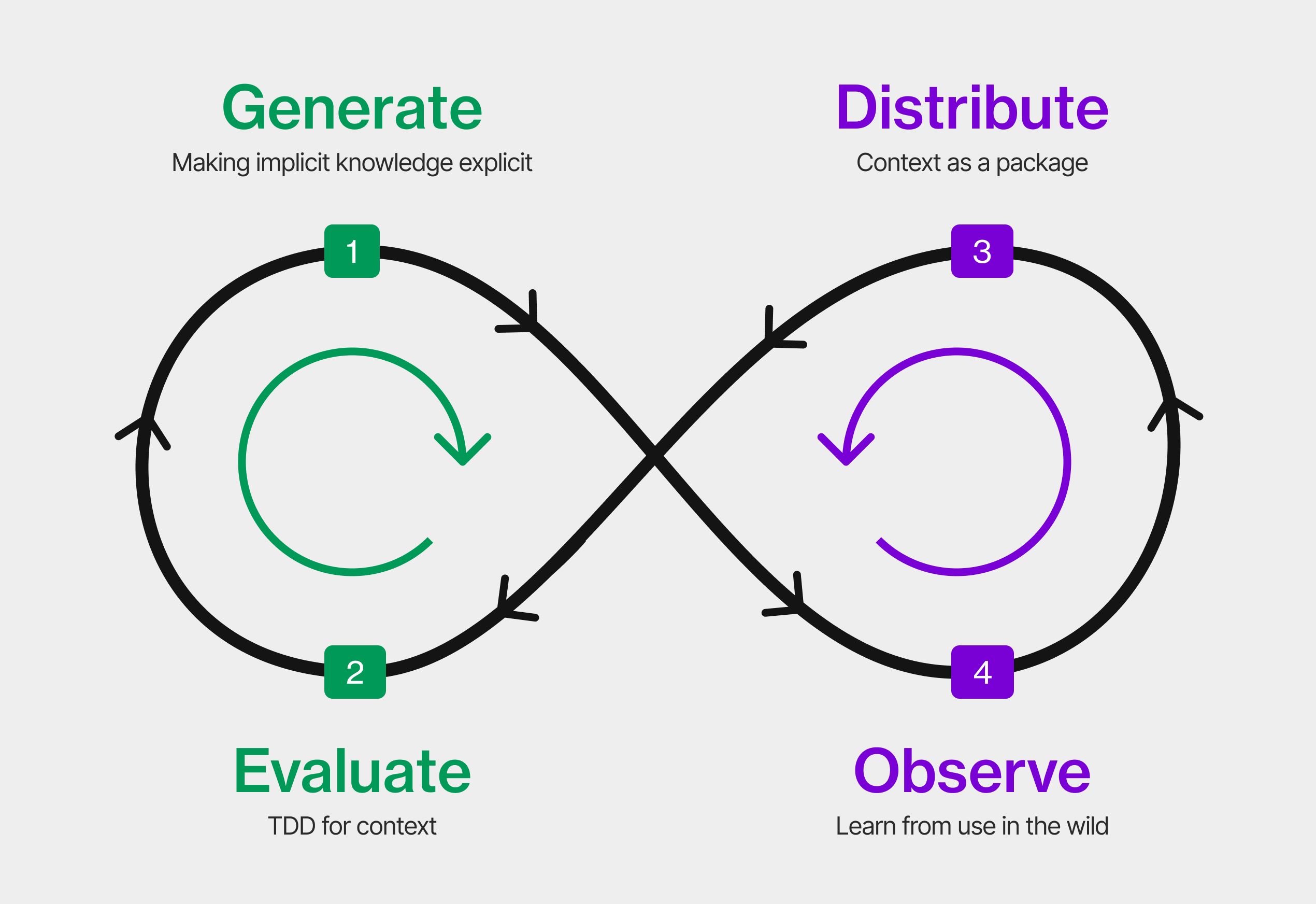

These are the lessons from applying CI/CD thinking to the Evaluate and Observe stages of the Context Development Lifecycle. The problems split into two groups: the first three are about running evals - when, how often, and who decides what passes. The last four are about what you're measuring - and why context behaves differently from code under test.

Part 1: Running evals

Problem 1: Evals aren't deterministic - so you can't gate on them like tests

LLM outputs vary even at temperature 0. Run the same eval twice and you can get different results with nothing changed. If you've dealt with flaky tests in CI, you know what happens next: the team stops trusting the signal, stops investigating failures, and starts merging anyway. Evals have the same failure mode - except the flakiness is structural, not a bug you can fix.

This is why one run tells you almost nothing. SkillsBench recommends five trials minimum per scenario before drawing conclusions. You're not looking for a pass or fail - you're looking for a signal. And not all signals carry the same weight: the acceptable failure rate for a security rule is not the same as for a style convention. Set the budget per eval type, not across the board.

That's the right mental model: error budgets, not pass/fail. Decide in advance what failure rate is acceptable - and make it explicit, because it's a product call, not a technical one. Trending those rates over time is also how the Observe stage of the CDLC pays off - you're not just catching failures, you're building a signal about how your context is holding up.

What to do: Before you wire anything into CI, set your mental error budget: what pass rate is acceptable for this eval? Where you can, prefer binary evals over open-ended ones. Eugene Yan makes the case for this: granular scores sound useful, but teams always end up asking for a recommended threshold so they can report pass/fail anyway. Binary forces the decision boundary early. Did the agent call the right API? Did it modify a file it wasn't supposed to? The answer is yes or no - easy for you to verify, easy for the agent to reason about.

Problem 2: When not all evals pass, who gives the go/no-go?

Before you ask who owns the go/no-go, ask a harder question: has your team actually defined what good looks like? What's an acceptable refactor? What does your error handling convention mean in practice? If you can't answer those questions, you can't write a meaningful eval - and when the agent gets it wrong, "the agent decided" is not an accountability position. You can't delegate judgment you haven't defined yourself.

This is also why eval quality matters as much as context quality. Teams have always struggled to write good tests - too shallow, too brittle, testing the wrong thing. With evals the problem is worse. Non-determinism means a bad eval can pass most of the time and still miss real failures. And because the output is rarely binary, a poorly written eval doesn't just miss problems - it actively misleads you. A shallow eval gives you false confidence: it passes, nothing looks wrong, but the agent is still making mistakes your evals weren't designed to catch. AI can help here - if you have good context, you have the raw material to generate meaningful evals from it. The same context that drives the agent can help generate the tests.

This is where the go/no-go becomes a business conversation, not a technical one. Writing the right evals requires domain understanding - knowing not just what the code should do, but what the team has agreed it should look like. That's rarely just a developer call. It crosses into product, into standards, into organizational decisions about what quality means for your context.

What to do: Start by defining what good looks like - but treat it as an iterative process, not a one-time exercise. Your first evals will be imperfect. Real cases are what sharpen them: every time the agent makes a mistake that slipped through, that's a new eval waiting to be written. Be explicit about which evals can gate automatically and which require a human decision. The more judgment involved, the more explicit you need to be about who owns that judgment - and the less you can rely on the pipeline to decide for you.

Problem 3: Not all evals need to run on every commit

This is where context CI/CD starts to feel familiar. The iteration loop is the same one you already know: while iterating in your dev environment you run a targeted subset - the evals closest to what you're changing, fast feedback as you tune. When you push, the full eval suite runs on the CI server - sandboxed so each run starts clean, no state from previous sessions, no learnings carried over from past runs. If something regresses, fix and repeat. Think of it like a sonar system: a quick pulse first to see what's working, then increasing the reach as you get closer to shipping.

This is where eval-driven context development starts to make sense. Instead of writing context and then figuring out how to test it, you write the eval first - define what good looks like before you start tuning. The eval becomes the spec. If you can't write the eval, you haven't thought clearly enough about what you want the agent to do. It's the same discipline as test-driven development, applied to context.

The suite will grow naturally - every real usage session surfaces edge cases and failure modes that no synthetic eval would have caught upfront. Which is exactly why you need to layer from the start, before it slows your feedback loop down.

What to do: Layer by weight, not by volume. Whether the agent created the right directory structure is a correctness check. Whether it leaked a secret is a safety check. Those need different treatment, different thresholds, different triggers. Non-negotiables run on every PR, lean and fast - narrow beam. The broader suite runs on the CI server before anything merges - wider scan. Treat vibe checking as exploratory testing: you're probing the edges to discover what to formally capture next. A vibe check that doesn't become a scenario is a signal that gets lost.

Part 2: What you're measuring

Problem 4: Observability beats synthetic testing

Synthetic evals are great for fast feedback - they give you a baseline before you've seen any real usage. But the most valuable evals come from real use. Every time you hit an issue while developing - the agent does something unexpected, takes the wrong approach, misses a constraint - that's an eval waiting to be written. A scenario born from a real failure is worth ten you imagined at a whiteboard.

No matter how carefully you design your eval suite upfront, you can't anticipate all the ways your agent will be used. Real codebases have quirks. Teams have conventions no spec fully captures. Edge cases emerge from the combination of your context, your toolchain, and the specific tasks developers actually bring to the agent. Synthetic evals encode what you imagined. Observability captures what actually happens.

Vercel is a good example of turning real failures into an actionable eval suite. Their next-evals-oss repo has specific gaps from real usage: coding agents generating incorrect code for Next.js APIs the models hadn't been trained on yet - things like use cache, connection(), and forbidden(). Instead of leaving that as a known issue, they turned each failure into a self-contained eval with a prompt, a failing test, and a binary verifier: build, lint, test pass or they don't. That's observability in practice - you watch what breaks, you build the test, you wire it into CI.

What to do: Observe what your agent does in real usage - capture the failures and turn them into evals. A failure in real usage is not feedback until it is in your eval suite. Wire your agent to an observability tool - something like Langfuse or similar - so that loop closes automatically. Every session becomes a source of new eval candidates.

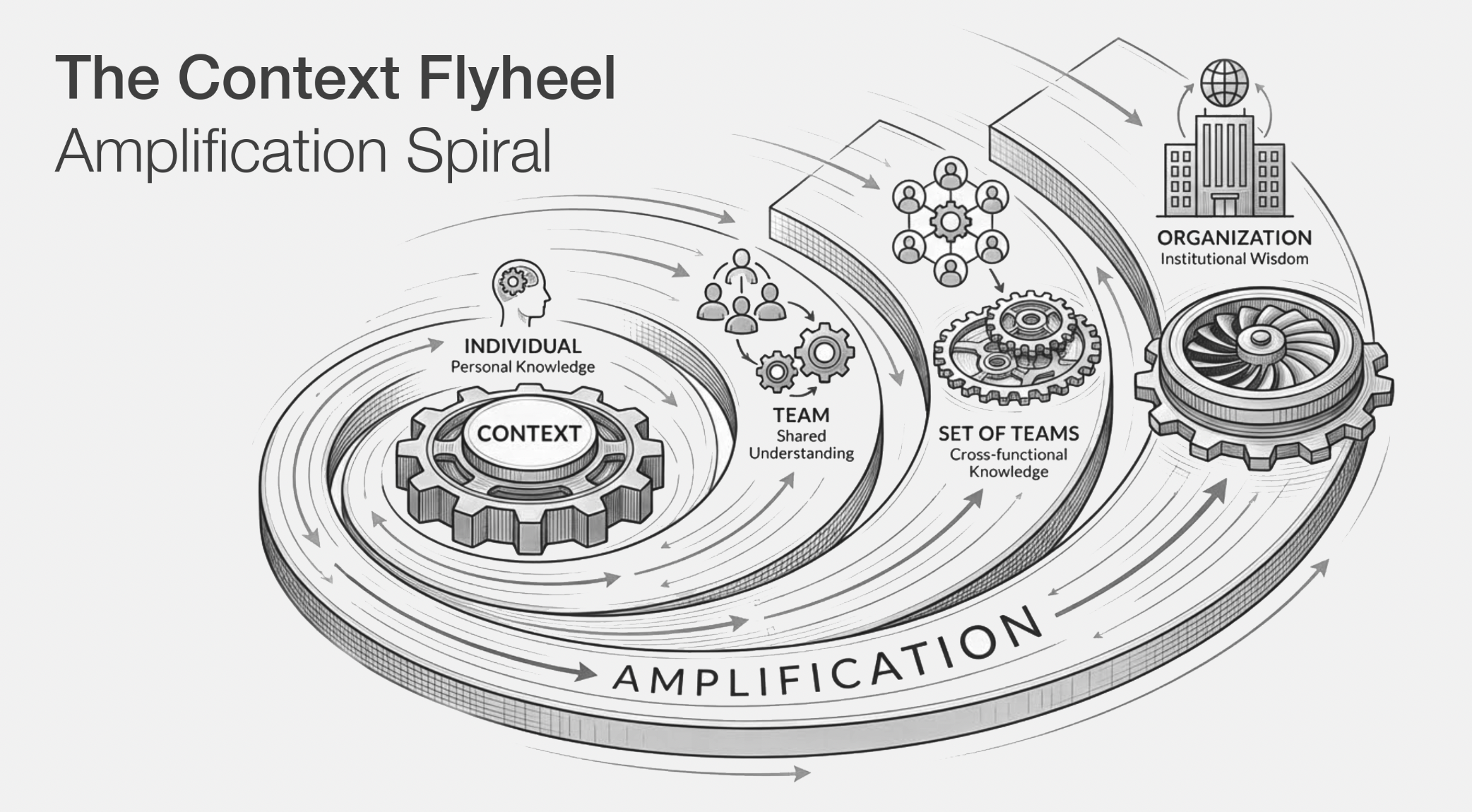

This is where the context flywheel starts to spin. Better signals surface better failures. Better failures produce better evals. Better evals produce better context. Better context produces better agent output - which surfaces better signals.

Problem 5: Context goes stale. So do evals

Tests break when code breaks. Evals can keep passing while your agent quietly drifts - because staleness here has nothing to do with your repo. Two things cause it.

The first is the world changing underneath you. A new coding agent version ships. Instructions your CLAUDE.md relied on to constrain certain behaviors no longer work the same way - the new version interprets them differently, or has learned to handle them without needing those instructions at all. A shared skill your team depends on gets updated upstream. Nothing in your repo moved. Your scenarios still pass. But your agent is steering from stale context. And stale context isn't just unhelpful - instructions written for an older agent version or a workflow that no longer exists can actively confuse the agent, producing worse results than no instruction at all. Unlike a stale test, a stale instruction doesn't fail loudly. It just quietly makes things worse.

The second is instruction changes having side effects. You add a rule to stop the agent modifying test files directly. It works - but it also quietly shifts how the agent handles fixture setup in ways you didn't anticipate. The right question after any context change isn't "did the agent produce good code?" It's more precise: did exactly the intended behavior change, and nothing else? Most teams don't have scenarios targeted enough to catch that distinction.

This is the same problem as dependency drift in regular software - except it's silent in both directions. A package update breaks a test and you know immediately. A coding agent update shifts behavior and your evals keep passing. The signal disappears exactly when you need it most. And it gets circular: your evals are supposed to catch stale context, but if your evals are also stale, the safety net has holes in the safety net. The fix is the same instinct as dependency monitoring - just as Dependabot watches for upstream package changes, you need scheduled eval runs that catch drift from agent updates or shared skill changes, independent of commits.

What to do: Run scheduled evals independent of commits - especially if you're pulling in external context or auto-upgrading agent versions. Write a targeted eval alongside every instruction change that asks not just "does this work" but "did only this change." And use your observability data for periodic AI-assisted eval reviews - ask the agent to compare your eval suite against recent usage patterns and flag what no longer applies.

Problem 6: The whack-a-mole problem

You add a section to your CLAUDE.md on naming conventions. Targeted, specific, harmless. Your evals for naming pass. But three other scenarios that were passing last week are now failing - the agent is handling error messages differently, approaching test file structure in a new way, making different decisions about imports. You didn't touch any of that. You didn't mean to change it. Adding instructions doesn't just add behavior, it changes behavior.

This is structural, not a mistake. LLMs are not rule engines. You can't add one instruction and expect only that behavior to change. The model is a black box - you put context in, behavior comes out, and the relationship between the two is never fully predictable. Every instruction you add shifts the whole.

This is why no eval suite can ever be complete. You can write scenarios for every instruction you have and still miss what happens when they interact. Every fix is a potential new whack - you fix one thing, something adjacent moves. As Hamel Husain argues, the right mental model is evals as a monitoring layer, not a coverage target.

What to do: When you make a context change, rerun your full eval suite before merging. Unexpected failures elsewhere are the signal. Keep a change log - what changed, what broke, what you adjusted. It tells you which instructions are “hotspots” - where concerns overlap and a small change in one place ripples into another.

Problem 7: Your context, your evals

Vendors will sell you ready-made metrics - helpfulness scores, coherence ratings, quality indexes. These might make sense for general LLM applications, but for coding agents they measure the wrong thing entirely. Did the agent write fluent code? Irrelevant. Did it create the right file structure, follow your conventions, avoid touching files it shouldn't have? Those questions need evals you define yourself, not off-the-shelf scores. A generic metric applied to a coding agent is measuring the surface of the output, not the behavior that matters.

Tools like Harbor Bench make it easy to run evals - bring your own tasks, define your own criteria, wire up a judge. It handles the execution well. But it doesn't help you find the right scenarios in the first place. That definition work is the hard part: Tessl's eval generation can help bootstrap it, turning your existing context into a starting set of scenarios rather than starting from a blank page - and skill optimization can help you improve your context against those scenarios once you have them.

Once an eval score gates deploys, someone will figure out how to make the score go up. Not fraud - rational behavior in response to a metric that became a target. Goodhart's Law applied to context: when a measure becomes a target, it ceases to be a good measure. And when people optimize for the wrong target, the real failures stop surfacing. Nobody files a bug against a score that's trending up. This is why it matters to make it easy to report real issues - not just through eval dashboards, but through the actual development workflow. A developer who can flag a failure in one click is more valuable than a hundred theoretical scenarios. The easier it is to report reality, the less the score can drift from it.

What to do: Don't start with infrastructure. Start with defining what good looks like for your specific context. Add new scenarios from real failures as they surface. Once you have scenarios worth protecting, build holdout sets for periodic reality checks.

The score is a proxy. Your context is the thing. Generic metrics measure someone else's definition of good. The only evals worth optimizing for are the ones you defined yourself - from your codebase, your conventions, your team's standards.