Context is what drives your coding agents. Yet most of the tools teams reach for are optimized for one thing: generating code. Getting the most out of your agents depends on three dimensions maturing together:

- Agents and Tools: are yours context-aware, or just code generators?

- Context: are you managing it, or just hoping it works?

- People and Organization: who owns making context flow across your team?

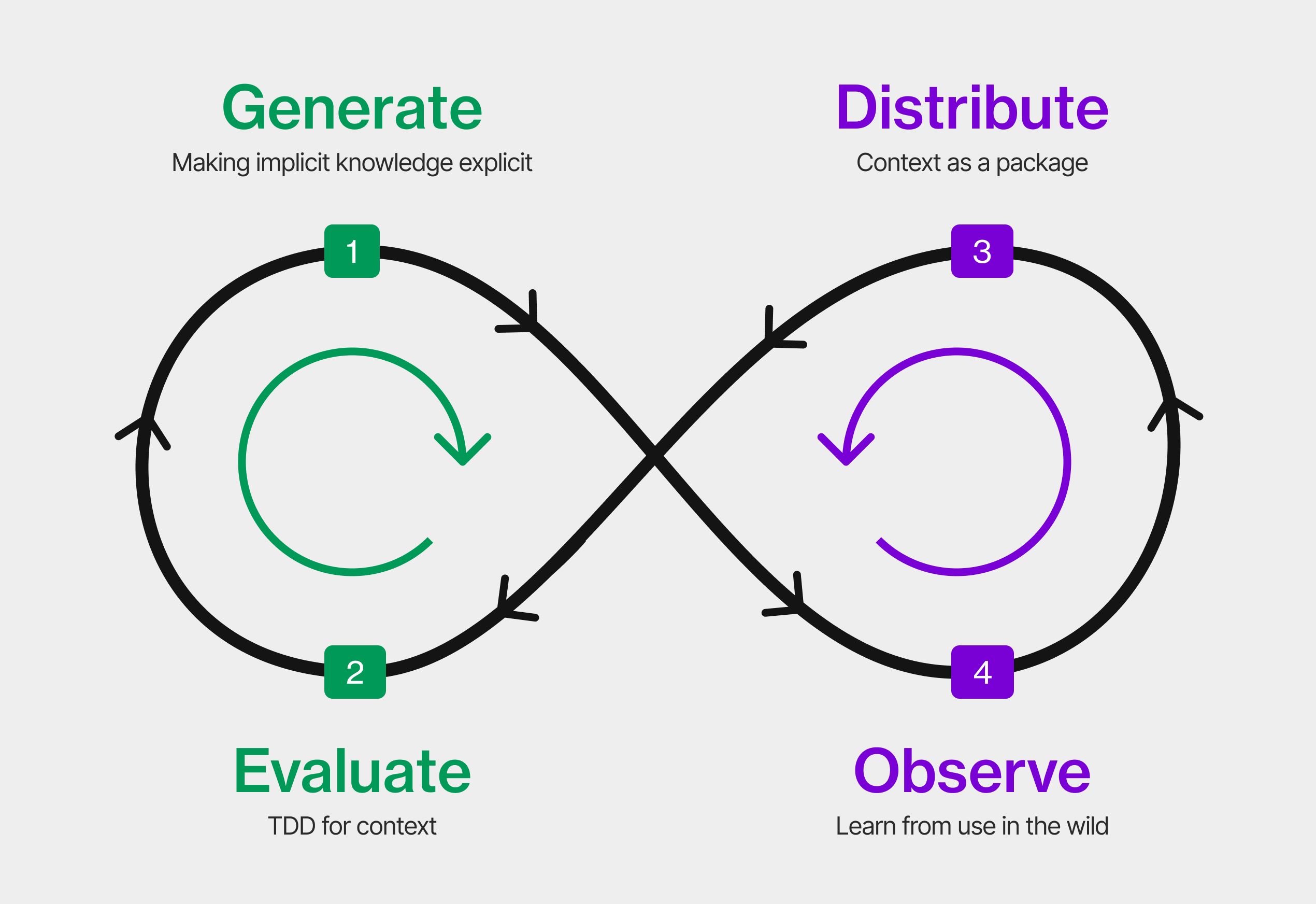

Each dimension follows the same maturity path: from manual and ad hoc to automated and self-improving. That path plays out at every stage of the Context Development Lifecycle (CDLC): Generate, Distribute, Test, Observe. Here's what that looks like across all three dimensions.

Agents and Tools

Coding agents are just the entry point. Toolchain maturity is about how context-aware your tools are, not just how much code they generate.

| Stage | Manual | Repeatable | Automated | Self-improving |
|---|---|---|---|---|
| Generate | Single coding agent, prompt in, code out | Coding agent consumes a rules file or CLAUDE.md | Multiple tools generate context from code, PRs and conversations | Tools across the whole workflow contribute to context generation |
| Distribute | Context lives in the coding agent session only | Coding agent picks up shared context from repo | Context registry feeds multiple tools automatically | All tools pull from and contribute to a shared context layer |
| Test | Developer checks if generated code looks right | PR reviews explicitly check context compliance, not just code quality | CI pipeline includes context evals alongside code tests | All tools report on context quality, gaps surface automatically |
| Observe | Developer notices tool getting something wrong | Team shares observations from coding agent sessions, patterns emerge | Multiple tools report on context usage and gaps | Full toolchain feeds observability data back into context generation |

Context

Nobody liked writing documentation. But everyone is writing context for their AI agents, because unlike docs, it makes the people writing it more productive right away. The question is how systematic you are about producing it, testing it, and distributing it.

| Stage | Manual | Repeatable | Automated | Self-improving |
|---|---|---|---|---|
| Generate | Prompt pasted in session | Rules file in repo, maintained by hand | Context generated from code, libraries, conversations and pull requests | Agents draft context, humans review |
| Distribute | Shared on Slack when someone asks | Known shared location, manually kept current | Registry with versioning and format adapters | Updates propagate automatically, usage drives promotion |
| Test | Vibe check, looks right | Team checklist for reviewing output | Evals run in CI, scores tracked over time | Eval suite evolves from observed failure patterns |
| Observe | One dev notices something wrong in their session and corrects it | Observations captured and shared across the team, multiple users contributing signal | Logs and hooks feed a central view, humans review patterns and act | Gaps auto-detected and fed back into generation without human initiation |

People and Organization

Context doesn't flow on its own. Someone has to make it happen, and as maturity grows, that responsibility moves from individual developers to team leads, then to managers, and finally to leadership.

| Stage | Manual | Repeatable | Automated | Self-improving |
|---|---|---|---|---|
| Generate | Developer creates context for their own use | Team lead encourages sharing, team writes conventions together | Managers create systems to capture context from code, PRs and conversations | Leadership creates conditions where context generation is everyone's default |
| Distribute | Developer keeps context to themselves or shares ad-hoc | Team lead ensures context is in a shared location | Managers set up cross-team registries and publishing workflows | Leadership treats context like a product, versioned, maintained, depended on |
| Test | Developer eyeballs their own output | Team lead sets review criteria for the team | Managers run evals across teams, make quality a shared standard | Leadership makes context quality a strategic priority with org-wide gates |
| Observe | Developer notices friction in their own session | Team lead collects feedback across the team | Managers track cross-team patterns, act on signals | Leadership uses agent performance data to drive org-wide context strategy |
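
The three tables share one grid: four lifecycle stages by four maturity levels, repeated per dimension. One way to use them is as a self-assessment. The sketch below is only an illustration of that idea, not part of the framework itself: the `Maturity` enum, the `lagging_cells` helper, and the example values are all hypothetical names and made-up data.

```python
from enum import IntEnum


class Maturity(IntEnum):
    MANUAL = 1
    REPEATABLE = 2
    AUTOMATED = 3
    SELF_IMPROVING = 4


CDLC_STAGES = ("Generate", "Distribute", "Test", "Observe")

# dimension name -> lifecycle stage -> maturity level
Assessment = dict[str, dict[str, Maturity]]


def lagging_cells(assessment: Assessment) -> list[tuple[str, str]]:
    """Return (dimension, stage) pairs sitting at the lowest maturity found."""
    lowest = min(m for stages in assessment.values() for m in stages.values())
    return [
        (dimension, stage)
        for dimension, stages in assessment.items()
        for stage, m in stages.items()
        if m == lowest
    ]


# Hypothetical self-assessment; the values are made up for illustration.
example: Assessment = {
    "Agents and Tools": dict(zip(CDLC_STAGES, [Maturity.AUTOMATED] * 4)),
    "Context": dict(zip(CDLC_STAGES, [Maturity.REPEATABLE] * 4)),
    "People and Organization": {
        "Generate": Maturity.REPEATABLE,
        "Distribute": Maturity.MANUAL,
        "Test": Maturity.REPEATABLE,
        "Observe": Maturity.MANUAL,
    },
}

print(lagging_cells(example))
```

In this made-up example the tooling is ahead of the organization, so the cells worth working on first are Distribute and Observe under People and Organization.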

Signals of friction

The three dimensions don't mature in isolation. They create leverage or drag on each other. Most teams experience this not as a framework problem but as friction. Here's what that looks like at each stage.

Generate

"Output is inconsistent across the team."

Knowledge lives in people's heads but never gets extracted into shared artifacts. The next step: create the habit and tooling to capture what people know, from conversations, code reviews, and daily work, and turn it into context agents can use.

Distribute

"We keep rewriting the same context because nobody owns it."

There's no easy mechanism for people to share and build on each other's context. The next step: build the infrastructure that makes sharing the path of least resistance, with a registry, clear ownership, and workflows that make reuse easier than reinvention.
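
To make "a registry, clear ownership" concrete, here is a minimal in-memory sketch. It assumes a very simple model: each piece of context has a name, an owner, and a version number that increases on every publish. `ContextEntry`, `ContextRegistry`, and the owner-only publishing rule are hypothetical; a real registry would persist entries and likely have richer permissions.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ContextEntry:
    """One published version of a piece of context (hypothetical schema)."""
    name: str
    version: int
    owner: str
    body: str


@dataclass
class ContextRegistry:
    """In-memory stand-in for a shared registry; nothing is persisted."""
    _entries: dict[str, list[ContextEntry]] = field(default_factory=dict)

    def publish(self, name: str, owner: str, body: str) -> ContextEntry:
        history = self._entries.setdefault(name, [])
        # Ownership rule (an assumption): only the first publisher may update.
        if history and history[0].owner != owner:
            raise PermissionError(f"{name} is owned by {history[0].owner}")
        entry = ContextEntry(name, len(history) + 1, owner, body)
        history.append(entry)
        return entry

    def latest(self, name: str) -> ContextEntry:
        """Return the most recently published version of a context entry."""
        return self._entries[name][-1]


registry = ContextRegistry()
registry.publish("api-conventions", "platform-team", "Use snake_case for JSON keys.")
registry.publish("api-conventions", "platform-team", "Use snake_case. Paginate lists.")
print(registry.latest("api-conventions").version)
```

Keeping every version rather than overwriting means a consumer can pin to a known-good version while the owner iterates.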

Test

"We shared our rules across teams and things got worse."

"We're running agents in parallel but spending all our time on code review."

Distributing context without a way to review and validate it amplifies problems at scale. The next step: make it easy to capture signals, review context quality, and validate before distributing widely.
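
One possible shape for "validate before distributing widely" is a gate that compares eval scores for agent output produced with the current context against output produced with a candidate change. The sketch below assumes you already have the agent outputs as strings. The two checks, the `score` function, and the `may_distribute` gate are hypothetical stand-ins for whatever rules your own context encodes.

```python
import re
from typing import Callable

# A check takes agent output and returns True when the output complies.
Check = Callable[[str], bool]

# Hypothetical rules a team's context might encode.
CHECKS: dict[str, Check] = {
    "no_print_calls": lambda out: "print(" not in out,
    "has_return_type": lambda out: re.search(r"def \w+\(.*\) -> ", out) is not None,
}


def score(outputs: list[str], checks: dict[str, Check]) -> float:
    """Fraction of (output, check) pairs that pass; 0.0 when there are none."""
    results = [check(out) for out in outputs for check in checks.values()]
    return sum(results) / len(results) if results else 0.0


def may_distribute(baseline: float, candidate: float, tolerance: float = 0.0) -> bool:
    """Gate: allow distribution only if the candidate does not regress."""
    return candidate >= baseline - tolerance


baseline_outputs = ["def add(a: int, b: int) -> int:\n    return a + b"]
candidate_outputs = ["def add(a, b):\n    print(a)\n    return a + b"]

baseline = score(baseline_outputs, CHECKS)
candidate = score(candidate_outputs, CHECKS)
print(may_distribute(baseline, candidate))
```

Run in CI, a gate like this stops a context change that makes output worse from reaching other teams, and the recorded scores give you the trend over time.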

Observe

"We have context but we don't know if it's actually working."

Context was written without real usage data behind it. Synthetic evals only go so far. What matters is what actually happens when agents use your context in production. The next step: instrument observation from real usage so you know what to fix and what to generate next.
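
As a rough illustration of instrumenting real usage, the sketch below records one event per agent session and aggregates how often output was corrected, per context entry. The `UsageEvent` schema and the "accepted" signal are assumptions; how you capture that signal (hooks, logs, review outcomes) depends on your tools.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass


@dataclass
class UsageEvent:
    """One agent session's use of a context entry (hypothetical schema)."""
    context_name: str
    accepted: bool  # was the agent's output accepted without correction?
    note: str = ""  # optional free-text description of the correction


def to_log_line(event: UsageEvent) -> str:
    """Serialize an event as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(event))


def correction_rates(log_lines: list[str]) -> dict[str, float]:
    """Share of sessions per context entry where the output was not accepted."""
    total = Counter()
    rejected = Counter()
    for line in log_lines:
        event = json.loads(line)
        total[event["context_name"]] += 1
        if not event["accepted"]:
            rejected[event["context_name"]] += 1
    return {name: rejected[name] / total[name] for name in total}


log = [
    to_log_line(UsageEvent("api-conventions", True)),
    to_log_line(UsageEvent("api-conventions", False, "used camelCase keys")),
    to_log_line(UsageEvent("testing-rules", True)),
]
print(correction_rates(log))
```

Entries with a high correction rate are candidates to fix first, and the free-text notes hint at what context to generate next.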

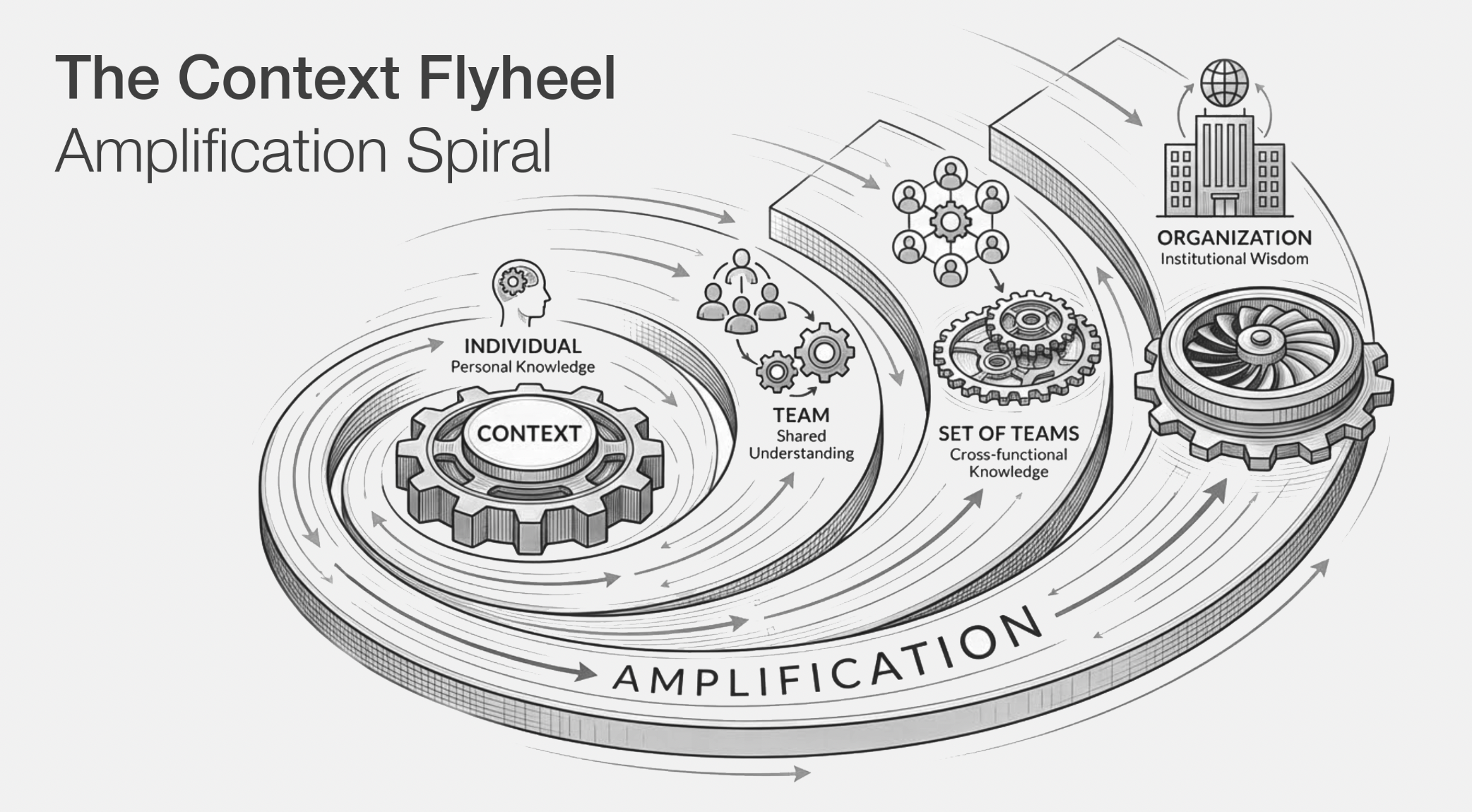

The flywheel

When all three dimensions advance together (context leading, agents enabling, the organization adapting), the CDLC starts spinning as a context flywheel: a continuous improvement loop. Observation feeds generation. Testing catches regressions. Distribution scales what works.

Where to go from here

**Start today.** Take stock of what context your team has already created. Where does it live? Who owns it? Is it shared? That audit alone will tell you where you are and where to go next.
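
A first pass at the "where does it live?" question can be scripted. The sketch below walks a repository and lists files that look like agent context. `CLAUDE.md` is the only file name taken from this article; the other names in `CONTEXT_FILE_NAMES` are assumptions, so swap in whatever your tools actually read. Ownership and sharing still need a human answer.

```python
from pathlib import Path

# CLAUDE.md comes from the article; the other names are assumptions.
CONTEXT_FILE_NAMES = {"CLAUDE.md", "AGENTS.md", ".cursorrules"}


def find_context_files(root: Path, names: set[str] = CONTEXT_FILE_NAMES) -> list[Path]:
    """Return every file under root whose name is in names, sorted by path."""
    return sorted(p for p in root.rglob("*") if p.is_file() and p.name in names)


def audit(root: Path) -> list[dict[str, object]]:
    """List each context file with its relative path and line count."""
    return [
        {
            "path": str(p.relative_to(root)),
            "lines": len(p.read_text(encoding="utf-8").splitlines()),
        }
        for p in find_context_files(root)
    ]


if __name__ == "__main__":
    # Audit the current directory; pass a repo checkout path instead if needed.
    for row in audit(Path(".")):
        print(f"{row['lines']:>5}  {row['path']}")
```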