There is considerable discussion in the design community about what it means to become ‘AI-native’. Much of that conversation revolves around productivity, including faster workflows, quicker drafts, higher output, and fewer manual steps. Those gains are real and genuinely useful.

Yet there is another, quieter dimension that receives less attention.

Sometimes AI does not simply accelerate our work. Sometimes it exposes the gap between what users expect and what our product actually does. Occasionally, fixing an AI agent hallucination becomes less about correcting the model and more about tightening the system around it.

The Moment: An Installation Command That Did Not Exist

In the run-up to the launch of Task Eval, a system for measuring whether agent skills actually perform as expected over time, I was doing dry runs of our user journeys. Rather than following the idealised path we have carefully designed, we try to simulate the messy, practical queries someone might attempt at 10pm when they just want something to work. No context, no patience, and often no intention of reading documentation.

One question crossed my mind: what would someone see if they Googled ‘how to install Tessl’?

The result surprised me.

Google’s AI Overview confidently presented two installation methods: one via npm and another via curl.

The problem? We did not officially support a curl installer at the time. If someone had copied that command into their terminal, they would have encountered friction immediately. Not a subtle edge case or an obscure error, but a broken first experience at the precise moment of intent.

The AI was hallucinating, and yet the hallucination felt entirely plausible. That was the unsettling part. It was not suggesting something bizarre or unrelated. It was suggesting something we arguably should have had.

Why This Matters

At first glance, this looks like a minor developer experience issue. A missing install pathway. An easily dismissed inconsistency.

But installation reliability is not peripheral. It is foundational.

When the first command a developer runs fails, trust drops quickly. Friction accumulates. Even if the core system is robust, the experience has already introduced doubt. Coding agent reliability does not begin at evaluation time. It begins at installation.

The AI agent hallucination was not just incorrect output. It surfaced a gap between ecosystem expectations and our implementation. That gap is where trust quietly erodes.
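One way to make that kind of gap visible during a dry run is to compare the install commands an external source suggests against the ones you actually support. The following is a minimal sketch in Python, not a description of Tessl's tooling. The allowlist `SUPPORTED_INSTALLERS`, the rough regular expressions, and the placeholder names `example-cli` and `get.example.com` are all assumptions made for illustration.

```python
import re

# Hypothetical allowlist: the installer kinds a project officially supports.
SUPPORTED_INSTALLERS = {"npm"}

# Rough, illustrative patterns for spotting install commands in free text.
INSTALLER_PATTERNS = {
    "npm": re.compile(r"\bnpm\s+(?:install|i)\b[^\n]*"),
    "curl": re.compile(r"\bcurl\s[^\n]*\|\s*(?:sh|bash)\b"),
}


def find_install_commands(text):
    """Return (kind, command) pairs for every install command found in text."""
    found = []
    for kind, pattern in INSTALLER_PATTERNS.items():
        for match in pattern.finditer(text):
            found.append((kind, match.group(0).strip()))
    return found


def unsupported_commands(text, supported=SUPPORTED_INSTALLERS):
    """Return only the suggested commands whose installer kind is not supported."""
    return [(kind, cmd) for kind, cmd in find_install_commands(text)
            if kind not in supported]


# Placeholder text standing in for a copied AI-generated answer.
overview = """
Install with npm:
npm install -g example-cli
Or with curl:
curl -fsSL https://get.example.com | sh
"""

for kind, command in unsupported_commands(overview):
    print(f"unsupported {kind} installer suggested: {command}")
```

A flagged command is not automatically a defect in the external source. As in our case, it may be a prompt to ask whether the expectation behind it is reasonable.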

The Reframing

Once we recognised the implications, the conversation could have stalled there. It would have been easy to treat the hallucination as an external problem, not ours. AI systems get things wrong. Users should check the documentation. All entirely reasonable responses.

But when I shared the screenshot internally, one comment from Patrick, one of our DevRels, shifted the tone of the conversation. He wrote:

Hallucinations are the logical features a product should have 🙂

That comment lingered.

Instead of dismissing the output as incorrect, we treated it as a signal. Why did the AI assume we supported curl? Because most modern CLI tools do. It was not inventing randomly. It had extrapolated from patterns across the ecosystem.

In other words, the hallucination reflected a reasonable user expectation. The uncomfortable question was not whether the model was wrong, but whether we were slightly behind the curve.

From Signal to Shipping

Once framed that way, the discussion changed. Instead of debating the accuracy of the AI output, we began asking whether the expectation it surfaced was valid. curl support was already on the roadmap in a rough, unofficial form. The idea was not foreign to us; it simply had not been prioritised.

The internal thread gathered momentum quickly.

curl is not officially supported yet, but it is live

– Shaun

Would a redirect still work? If so I could make this work quickly…

– Richard

If somebody runs that command because of Google, it is probably better to get Tessl than a 404 🙂

– Ernesto

The conversation has been slightly compressed for storytelling purposes, but the spirit is accurate.

The conversation moved from analysis to action with very little friction. There was no blame, no dramatic post-mortem. Just a shared recognition that if this is what developers expect, we should probably meet that expectation. The tone was pragmatic, slightly amused, and quietly determined.

Armed with conviction and a healthy amount of caffeine, Shaun tightened the static binaries, formalised the installer script, and aligned the surrounding infrastructure. What had been a half-finished capability became a supported pathway. Within a day, the command that had previously been fiction was real: curl -fsSL https://get.tessl.io | sh. The instructions in the AI Overview were no longer hallucinated. They were accurate.

There was something oddly satisfying about that. A speculative guess generated by a model had become a legitimate feature, not because we were chasing AI output, but because the output exposed a sensible gap.

What began as a minor scare became a small but meaningful product improvement. It aligned us more closely with prevailing developer norms and reduced friction at the moment of discovery. It also unlocked adjacent benefits, including smoother npx usage and improvements in packaging that contributed to performance gains. The change was not dramatic in isolation, but it strengthened the first-run experience in ways that documentation alone could not.

A tool that works perfectly after installation still fails if installation breaks.

The Broader Lesson: AI Agent Hallucinations Can Reveal Reliability Gaps

There is a quiet irony here.

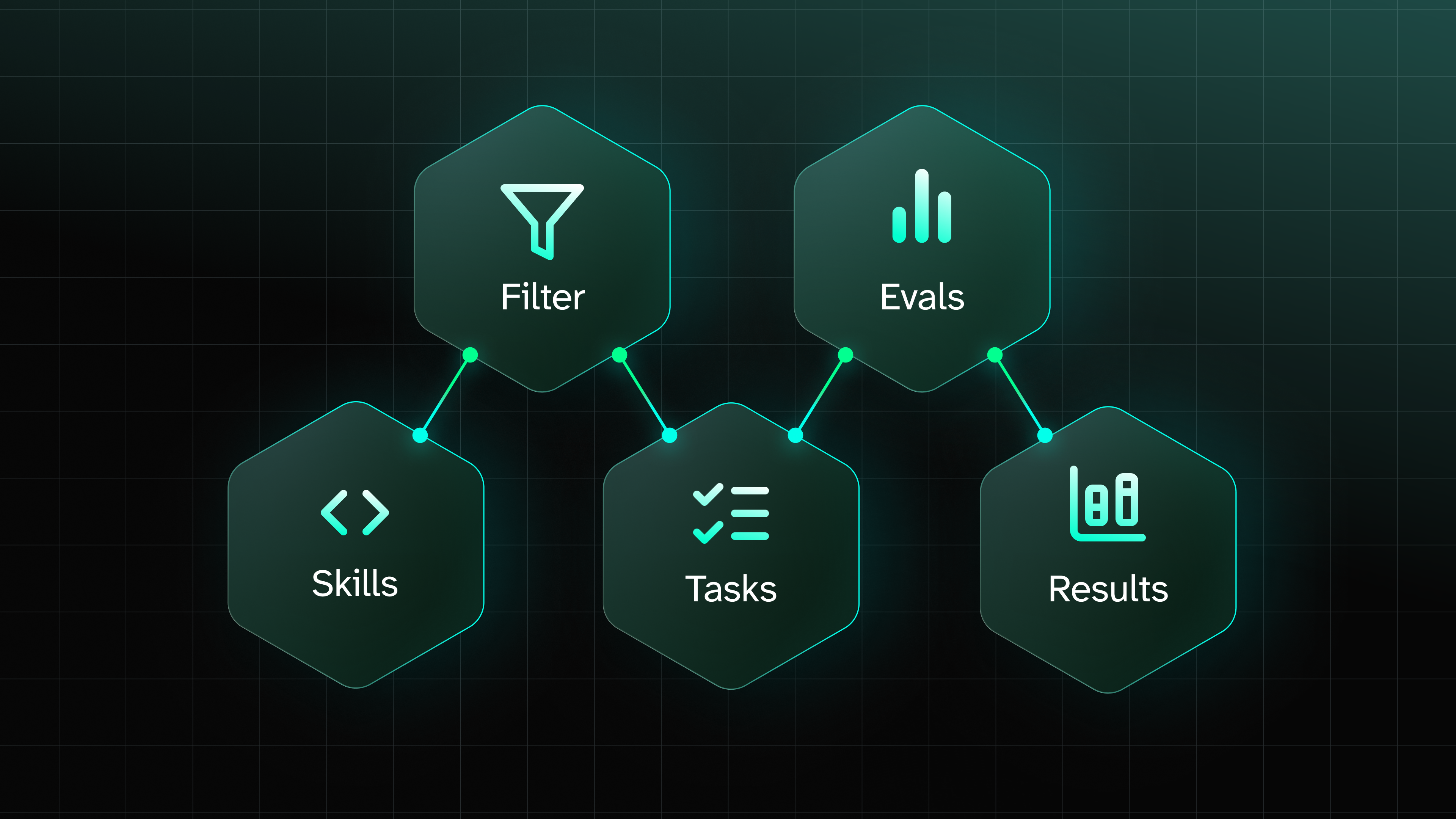

We were launching Task Evals, a system designed to measure whether agent skills actually work over time. At the same moment, an AI system surfaced a gap in our own experience. It was a gentle reminder that evaluation runs in both directions.

AI does not only generate outputs. It encodes assumptions about how modern tools should behave. When those assumptions diverge from your product, that divergence is informative. It tells you something about the standards your ecosystem has normalised.

Not every hallucination deserves to be shipped. Many are genuinely incorrect or infeasible. Blindly following generated suggestions would be irresponsible. Yet occasionally, a hallucination is not noise but a mirror. It reflects the standards your users already assume you meet.

In this case, listening to that signal strengthened both the product and the team. It was not a story about capitulating to an AI mistake. It was a story about engineers and product thinkers recognising a reasonable expectation, collaborating quickly, and turning an awkward surprise into a better experience. Sometimes the shortest path to clarity begins with something that should not have worked, and the willingness to treat it as a prompt rather than a problem.

Try It Yourself

You can now install Tessl directly via curl -fsSL https://get.tessl.io | sh

Browse the Tessl Registry to explore evaluated skills and documentation tiles, many with built-in evals so you can measure agent adherence before trusting it with your codebase.
