10 Apr 2026 · 11 minute read

I Spent a Week Fixing the Wrong Skill (And Other Lessons from Evaluating an AI PR Reviewer)


TLDR

- The baseline model (Claude Opus, no guidance) already scores roughly 65-70% on its own and catches most textbook bugs. The plugin's value comes from false positive suppression and risk classification, not from raw bug detection.

- The plugin had been classifying risk correctly all along. I just wasn't measuring it. One eval weight change, zero code changes, and the gap widened 9 percentage points.

- I spent four versions rewriting the reviewer's prompt to fix a false positive. The actual fix was one paragraph in a completely different skill, upstream.

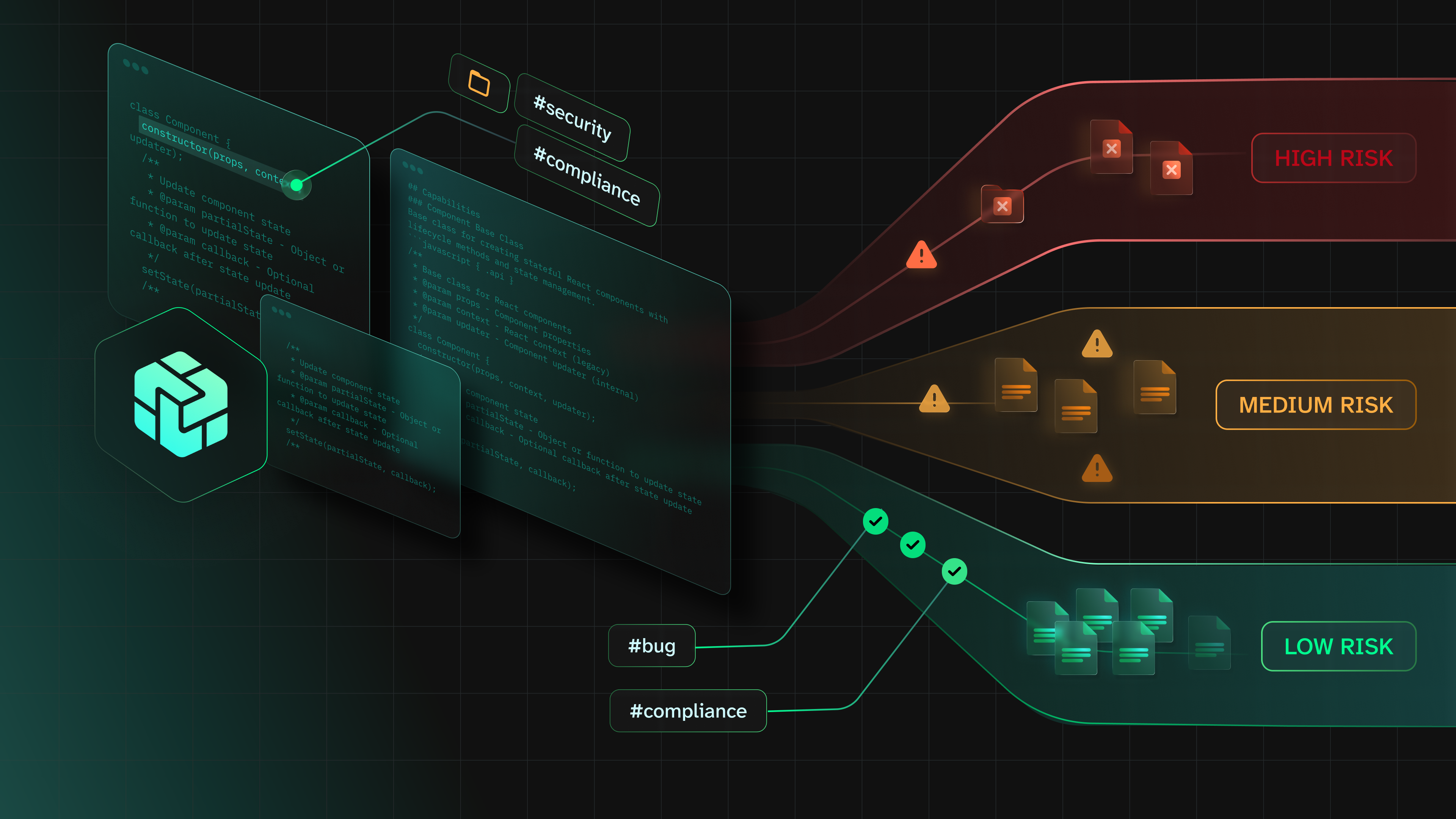

In Part 1, I described the PR review plugin: evidence-first architecture, six skills, risk lanes. It hit 97.7% accuracy across 43 eval scenarios. This post is about how it got there, because the eval journey taught me more than the final number.

How I evaluated the AI PR reviewer

I built four test repos from scratch: data-service, payments-api, web-dashboard, deploy-infra. Each has planted bugs of varying subtlety, from "you forgot to sanitize this input" to "this session TTL is set to zero, which means sessions never expire, which means stolen session tokens are valid forever."

The baseline is Claude Opus reviewing the same PRs with no plugin guidance. Just the model, the diff, and a generic "review this code" prompt. I started with 33 scenarios and ended with 43.

First surprise: the baseline scored ~70% on the initial 33 scenarios. It catches most textbook bugs (missing input validation, obvious SQL injection, unhandled error paths). The model is smart. This isn't 2023 anymore.

That ~70% is important context for everything that follows. It means any AI reviewer that just adds more bug-finding instructions on top of a capable model is competing for the remaining 30%. And if it generates false positives along the way, it might be net negative. The firehose problem the research warned about.

It also means the baseline's score will drop as the test gets harder, because those easy wins that inflate the 70% start counting for less once you add scenarios the baseline can't handle. Watch the baseline column in the table below. It goes down, not up. That's by design.

Where the gap actually comes from

Version 14, my first serious eval run: plugin 87.8% against the baseline's ~70%. Real gap. Here's what created it.

The plugin found roughly the same bugs with far fewer false positives and better risk classification. The evidence builder's lane system meant the reviewer wasn't hallucinating security findings on docs-only PRs. That's the difference between a review a developer reads and one they close after the second paragraph.

Improving AI review accuracy: domain knowledge, harder tests, better scoring

The first lever was domain knowledge. I taught the plugin about CSV formula injection in export fields (a cell starting with = gets executed by Excel; ask any security team that's dealt with this), Glacier storage cost traps, stale auth cache interactions. The kind of bugs a human reviewer with domain expertise catches because they've been burned before. That took the plugin from 87.8% to 94.5%.
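A minimal sketch of what a CSV formula injection guard can look like, assuming the export writes plain strings into cells. The names `neutralize_cell` and `export_rows` and the exact trigger set are illustrative assumptions based on common hardening guidance, not the plugin's actual rule:

```python
import csv
import io

# Leading characters that spreadsheet apps may treat as the start of a formula.
# This set is an assumption taken from common guidance, not the plugin's rule.
FORMULA_TRIGGERS = ("=", "+", "-", "@", "\t", "\r")


def neutralize_cell(value: str) -> str:
    """Prefix risky cells with a single quote so they are read as text."""
    if value.startswith(FORMULA_TRIGGERS):
        return "'" + value
    return value


def export_rows(rows: list[list[str]]) -> str:
    """Write rows to CSV text, neutralizing every cell first."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    for row in rows:
        writer.writerow([neutralize_cell(cell) for cell in row])
    return buffer.getvalue()
```

One trade-off in this sketch: legitimate values such as negative numbers also get the prefix, so a real export would probably want to treat typed numeric columns separately.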

Then I made the test harder. Ten new scenarios, tougher bugs, and I reweighted scoring so the gimme scenarios (where both plugin and baseline score 100%) counted for less. The gap blew open: plugin 94.1%, baseline 64.6%. A 29.5 percentage point spread. The harder I made the test, the wider the gap got.
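To see why down-weighting the gimme scenarios widens the gap and lowers the baseline's score, here is a small sketch. The scores and weights are made-up illustrations, not numbers from the eval, and `weighted_accuracy` is a hypothetical helper rather than the eval harness's real function:

```python
def weighted_accuracy(results: list[tuple[float, float]]) -> float:
    """results holds (score_fraction, weight) pairs, one per scenario."""
    total_weight = sum(weight for _, weight in results)
    return sum(score * weight for score, weight in results) / total_weight


# Hypothetical data: two easy scenarios both reviewers ace, one hard one.
plugin_scores = [1.0, 1.0, 0.9]
baseline_scores = [1.0, 1.0, 0.3]


def gap(weights: list[float]) -> float:
    """Plugin accuracy minus baseline accuracy under the given weights."""
    plugin = weighted_accuracy(list(zip(plugin_scores, weights)))
    baseline = weighted_accuracy(list(zip(baseline_scores, weights)))
    return plugin - baseline


equal_gap = gap([1.0, 1.0, 1.0])       # gimmes count fully
reweighted_gap = gap([0.5, 0.5, 1.0])  # gimmes count for half
```

With these illustrative numbers, neither reviewer's answers change, yet the gap grows once the easy scenarios stop padding both totals.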

The most interesting version bump barely touched the plugin at all. I changed the eval's scoring weights: risk classification went from 5 points to 10 points per scenario. The gap widened another 9 percentage points. Same plugin code, same scenarios. The plugin had been classifying risk correctly the whole time; I'd been underweighting the thing it was best at.
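The mechanics of that weight change can be sketched in a few lines. The criterion names and the pass/fail pattern below are assumptions for illustration (a reviewer that finds the bug but misses the risk classification); only the 5 to 10 point change comes from the eval itself:

```python
def scenario_score(earned: dict[str, bool], weights: dict[str, int]) -> float:
    """Fraction of available points earned under a given weighting."""
    total = sum(weights.values())
    got = sum(points for name, points in weights.items() if earned[name])
    return got / total


# Hypothetical outcomes: both find the bug, only the plugin classifies risk.
plugin = {"core_bug": True, "side_effect": True, "risk_class": True}
baseline = {"core_bug": True, "side_effect": True, "risk_class": False}

old_weights = {"core_bug": 15, "side_effect": 5, "risk_class": 5}
new_weights = {"core_bug": 15, "side_effect": 5, "risk_class": 10}

old_gap = scenario_score(plugin, old_weights) - scenario_score(baseline, old_weights)
new_gap = scenario_score(plugin, new_weights) - scenario_score(baseline, new_weights)
```

The reviewers' outputs are identical in both cases. Only the denominator and the value of the missed criterion move, which is how a scoring change alone can shift the gap.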

Final run, version 21: plugin 97.7%, baseline 66.6%.

    Version  What changed                          Plugin  Baseline  Gap
    ───────  ────────────────────────────────────  ──────  ────────  ──────────
    v14      First serious eval (33 scenarios)     87.8%   ~70%      ~18pp
    v15      Domain-specific hotspots              94.5%   ~70%      ~25pp
    v17      +10 harder scenarios, reweighted      94.1%   64.6%     +29.5pp
    v20      Risk classification weight 5→10       ----    ----      +9pp wider
    v21      Evidence builder fix (route guards)   97.7%   66.6%     +31.1pp

Here's what a scenario looks like. This is the session TTL zero eval (one of the "high subtlety" bugs I expected to stump the baseline):

Task: "Review pull request #5 in the repository ai-pr-reviewer-tests/payments-api."

Criteria (weighted checklist):

    {
      "context": "session_data cache TTL set to 0 means sessions persist in Redis indefinitely",
      "checklist": [
        {
          "name": "Catches session never-expire risk",
          "description": "Identifies that TTL=0 means sessions stored with no expiry, creating stale/orphaned sessions if the auth layer fails to explicitly delete them.",
          "max_score": 15
        },
        {
          "name": "Catches unbounded Redis memory growth",
          "max_score": 5
        },
        {
          "name": "Risk classified yellow or higher",
          "max_score": 10
        }
      ]
    }

The task is one sentence. The rubric is weighted: catching the core security risk (session never-expire) is worth 15 points, the memory growth consequence 5, and risk classification 10. The baseline caught this one at 100%.

When fixing the reviewer prompt doesn't work

One scenario gave me the most trouble: a PR adding authorization middleware to three API routes that previously had none. Correct code, good security practice. The plugin kept flagging it as HIGH severity: "potential security misconfiguration in route handling."

I rewrote the reviewer's instructions four times. Version one: I told the reviewer to consider whether route guards are additive security measures. Still flagged. Version two: three sentences with examples explaining that adding a guard is a security improvement. Flagged. Version three: I restructured the entire reviewer prompt section on security findings. Same result. Version four: I got specific. "If the change adds authorization checks to routes that previously had none, this is a hardening change, not a vulnerability."

Still flagged it.

The reviewer wasn't broken. The evidence builder upstream had classified the route change as "red lane": high risk, security-relevant, requires deep scrutiny. By the time the reviewer saw the code, the framing was already set. I'd been tuning the wrong skill for a week.

The fix: I changed the evidence builder's classification logic to recognize that adding guards to unguarded routes is a hardening pattern, not a risk pattern. The evidence pack now classified it as green-lane. The reviewer read the same diff, saw a green-lane classification, and correctly identified it as a security improvement.

4% accuracy on that scenario became 100%. I never touched the reviewer. The only thing that changed was what the evidence builder told it before it started reading the code.

Upstream evidence quality determines downstream review quality. The reviewer is only as good as the evidence pack it's handed. Fixing the reviewer's prompt is like arguing with a judge after the prosecution already presented tainted evidence. The bias is baked in before the verdict.
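The ordering problem can be sketched as a tiny pipeline. Everything here is hypothetical (the `RouteChange` fields, `classify_lane`, and the brief format are invented for illustration and are not the plugin's real data model); the point is only that the lane is decided before the reviewer reads anything:

```python
from dataclasses import dataclass
from enum import Enum


class Lane(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


@dataclass
class RouteChange:
    route: str
    guarded_before: bool
    guarded_after: bool


def classify_lane(changes: list[RouteChange]) -> Lane:
    """Classify by whether access got looser, tighter, or stayed unclear."""
    if any(c.guarded_before and not c.guarded_after for c in changes):
        return Lane.RED      # a guard was removed: access got looser
    if any(not c.guarded_before and c.guarded_after for c in changes):
        return Lane.GREEN    # guards were only added: hardening
    return Lane.YELLOW       # auth code touched, effect on policy unclear


def build_reviewer_brief(lane: Lane, diff_summary: str) -> str:
    """The reviewer sees the lane before it sees the diff."""
    return f"[{lane.value}-lane] {diff_summary}"


# Hypothetical PR: three previously unguarded routes gain a guard.
pr = [
    RouteChange("/api/orders", False, True),
    RouteChange("/api/refunds", False, True),
    RouteChange("/api/users", False, True),
]
brief = build_reviewer_brief(classify_lane(pr), "adds auth middleware to 3 routes")
```

If `classify_lane` returned red for this PR, no wording in the reviewer's own prompt would change the first thing it reads.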

Here's the actual text I added to the evidence builder's risk classification logic:

    Auth risk requires call-site analysis. Do not classify a PR as red
    solely because it touches permission-checking code. Read the call
    sites to determine whether the effective access policy changed.
    For example, a switch from every() to some() on a role array changes
    behavior — but if every call site passes OR-style role lists, some()
    is the correct semantic and the change is a bug fix, not a regression.
    Classify based on whether the access policy actually changed.

That's it. One paragraph of guidance in the evidence builder, telling it to check call sites before panicking about auth changes. The reviewer's prompt didn't change at all.
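The every() versus some() example in that guidance is easier to see in code. This sketch uses Python's `all()` and `any()` as stand-ins for the JavaScript array methods, and the role names and call site are assumptions made up for illustration:

```python
def has_access_every(user_roles: set[str], required: list[str]) -> bool:
    """AND semantics: the user must hold every listed role (like every())."""
    return all(role in user_roles for role in required)


def has_access_some(user_roles: set[str], required: list[str]) -> bool:
    """OR semantics: any one listed role is enough (like some())."""
    return any(role in user_roles for role in required)


# Hypothetical call site that passes an OR-style list: "admin or editor".
EDIT_ROLES = ["admin", "editor"]
editor = {"editor"}

denied_by_every = not has_access_every(editor, EDIT_ROLES)  # editor locked out
allowed_by_some = has_access_some(editor, EDIT_ROLES)       # intended policy
```

Looking at the diff alone, the switch reads as a loosening of access. Looking at the call site, the AND version was locking out users the list was meant to admit, so the switch restores the intended policy.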

AI catches more bugs than the research predicted

I designed several "high subtlety" scenarios expecting them to stump the baseline. Session TTL set to zero. A crash in an authentication provider that fails open instead of closed. The baseline caught both at 100%.
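For readers unfamiliar with the fail-open pattern, here is a minimal sketch of the difference. The provider, exception type, and function names are hypothetical stand-ins, not code from the test repos:

```python
class ProviderError(Exception):
    """Raised by the (hypothetical) auth provider when it crashes."""


def check_token(token: str) -> bool:
    # Stand-in for a real provider call; in this sketch it always crashes.
    raise ProviderError("provider unavailable")


def is_authorized_fail_open(token: str) -> bool:
    try:
        return check_token(token)
    except ProviderError:
        return True   # BUG: a provider crash grants access


def is_authorized_fail_closed(token: str) -> bool:
    try:
        return check_token(token)
    except ProviderError:
        return False  # a provider crash denies access
```

The two functions differ by one return value, which is what makes this kind of bug easy to miss in a diff.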

Models are more capable than the 2025 research estimated. The window for "bugs only AI-guided review can find" is narrower than I assumed, which is exactly why the plugin's value lives in the evidence pipeline (risk classification, false positive suppression, structured handoff) rather than in raw bug detection.

LLM variance, though, is real. One scenario (correlation ID propagation) scored 88% in one run and 36% in another. Same scenario, same plugin, same model. The difference is just... the model having a different day. Single-run evals can lie to you. I learned this the hard way in the Good OSS Citizen work, and I still almost got burned by it here.
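One way to guard against this is to run each scenario several times and report the spread alongside the mean. A minimal sketch, assuming scores are percentages and that `summarize_runs` is a hypothetical helper rather than part of the eval harness:

```python
from statistics import mean, stdev


def summarize_runs(scores: list[float]) -> dict[str, float]:
    """Aggregate repeated runs of one scenario instead of trusting one."""
    return {
        "mean": mean(scores),
        "stdev": stdev(scores) if len(scores) > 1 else 0.0,
        "spread": max(scores) - min(scores),
    }


# The two correlation ID runs quoted above, as percentages.
summary = summarize_runs([88.0, 36.0])
```

Two runs is still a tiny sample. The sketch only shows the shape of the report; a wide spread is a signal to run the scenario more times before reading anything into its score.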

The gap we haven't closed: developer trust

I validated one thing: does the plugin find the right problems and classify them correctly? Yes. 97.7% across 43 scenarios says yes.

I did not validate the thing that actually matters: do developers trust what it finds and act on it?

The 2025 research says AI review comments get adopted 1-19% of the time. My plugin produces better-structured, higher-signal findings. Maybe that adoption rate is higher. Maybe it isn't. I have zero data.

The retrospective skill exists. It's designed to compare the plugin's findings against human decisions and feed the results back. I never ran it. Not once. The plugin has a feedback loop that has never looped.

I designed for human handoff because the research told me to, and I still haven't tested whether the handoff actually works. Finding the right bugs is solved. Whether a developer reads the brief and actually changes their merge decision, that's the question this plugin can't answer yet, and it's the one that decides whether any of this matters.

Try it yourself

    tessl install tessl-labs/pr-review-guardrails

The eval corpus is in the GitHub repo. Forty-three scenarios across four test repos with rubrics. Fork it, add scenarios from your own domain, run the eval. If you use the retrospective skill on a real PR, you'll have more adoption data than I do.

Further reading:

Part 1 (what the plugin does and how to use it) | Research brief and eval corpus