26 Feb 2026 · 7 minute read

The Context Flywheel: Why the Best AI Coding Teams Will Win on Context

Why running the Context Development Lifecycle over time produces a compounding advantage your competitors can't copy.

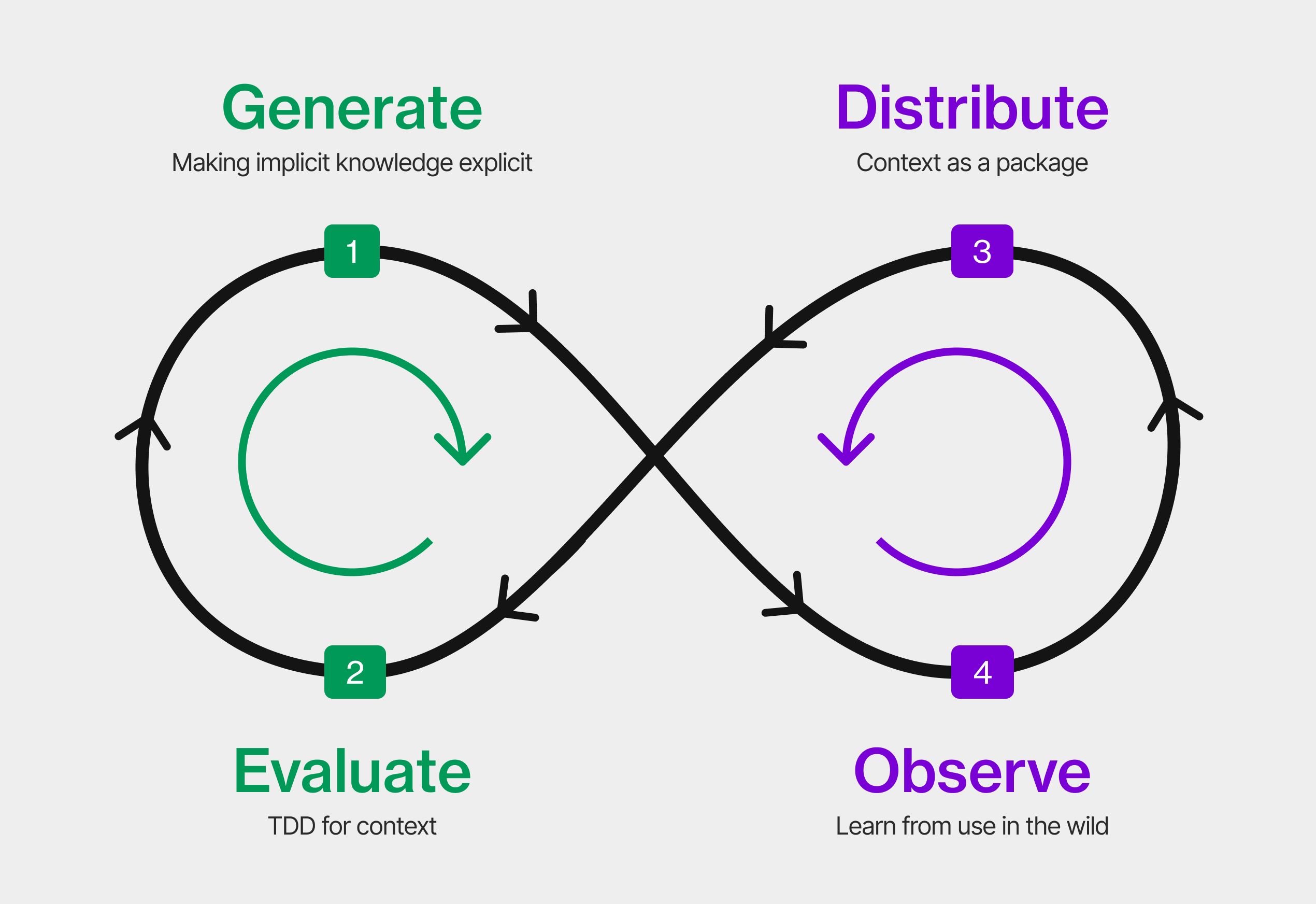

In the previous piece, I described what I think is an emerging Context Development Lifecycle (CDLC): Generate, Evaluate, Distribute, Observe. Together, the four stages treat context as an engineering artifact rather than a markdown file someone wrote once and forgot about.

But the lifecycle isn't the point. The point is what happens when you run it over time.

One loop is useful. Many loops are transformative.

The first time through the cycle, you capture some coding conventions, test them against what your agents actually produce, share them with the team, and notice where the agents still get it wrong. That's already better than what most teams do today.

The second time, you've fixed those gaps. The agent output improves. New, subtler gaps become visible. You fix those too.

By the tenth cycle, something has shifted. The agents aren't just following instructions better. Your entire team is coding differently: faster, more consistently, and with fewer corrections.

This is the context flywheel.

Better context produces better agent output. Better agent output generates better signals. Better signals produce better context. Developer, agent, team, and organisation all improve in the same loop.

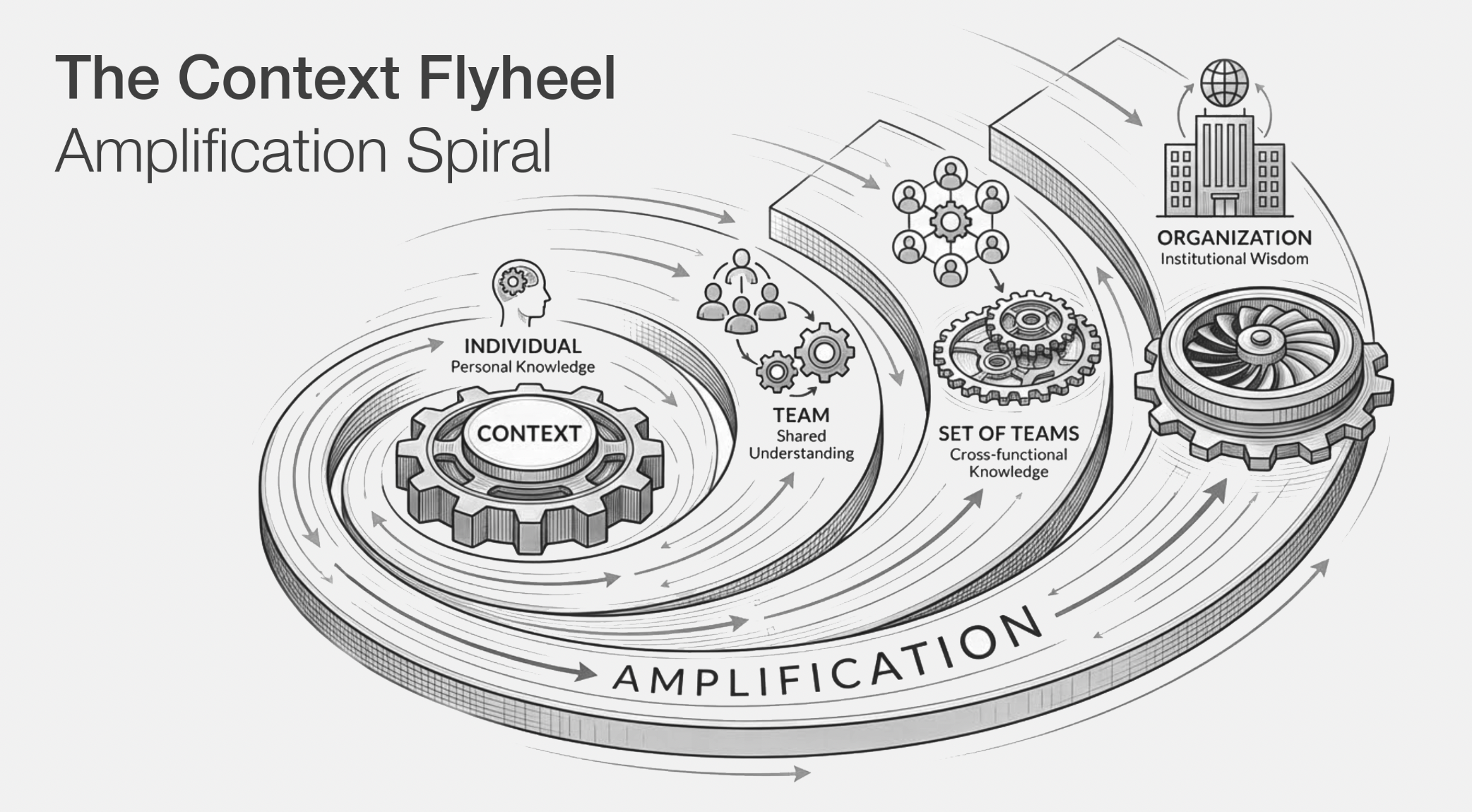

One investment, four returns

Most engineering investments have a single return. You write tests, you catch bugs. You set up CI, you ship faster. Context is different because it compounds in four directions simultaneously.

When a senior engineer encodes their expertise as a tested, versioned skill:

First return: agent quality. Every coding agent that encounters that domain handles it correctly going forward. The context does the teaching you used to do manually.

Second return: deeper expertise. The senior engineer has clarified their own understanding by making it explicit. Articulating knowledge you previously carried as instinct changes how you think about it.

Third return: team learning. Every junior developer can read the skill and learn the expected patterns, the constraints, the reasoning behind the decisions. The context becomes a teaching artifact.

Fourth return: organisational alignment. Over repeated cycles, developers and agents converge on shared terminology. Because agents interpret terms literally, precision in naming directly translates to precision in code. And because context is shared across the organisation, that terminology crosses boundaries. Alignment happens as a side effect.

One investment. Four returns. And each feeds back into the cycle.

The moat is context, not tools

Over time, the flywheel produces something that's hard to replicate: structured organisational context. Not a wiki that nobody reads. Not documentation that's perpetually out of date. Living, tested, versioned context that coding agents actively consume and that humans actively maintain. Knowledge that shows up directly in the quality and consistency of every line of code your agents produce.

An organisation that runs this flywheel for two years won't outpace competitors simply by building software faster: a lean startup with a greenfield codebase and no legacy debt can move just as quickly, regardless of which models or tools either side adopts.

The real moat is accumulated product knowledge: the edge cases catalogued, the user needs mapped, the domain reasoning encoded and made available to agents. Models are commoditising. Tools are converging. But when your agents draw on two years of continuously refined understanding of what your users actually need, they make better product decisions and handle the full depth of problems that a new competitor's agents simply can't. That knowledge is yours. That's the moat.

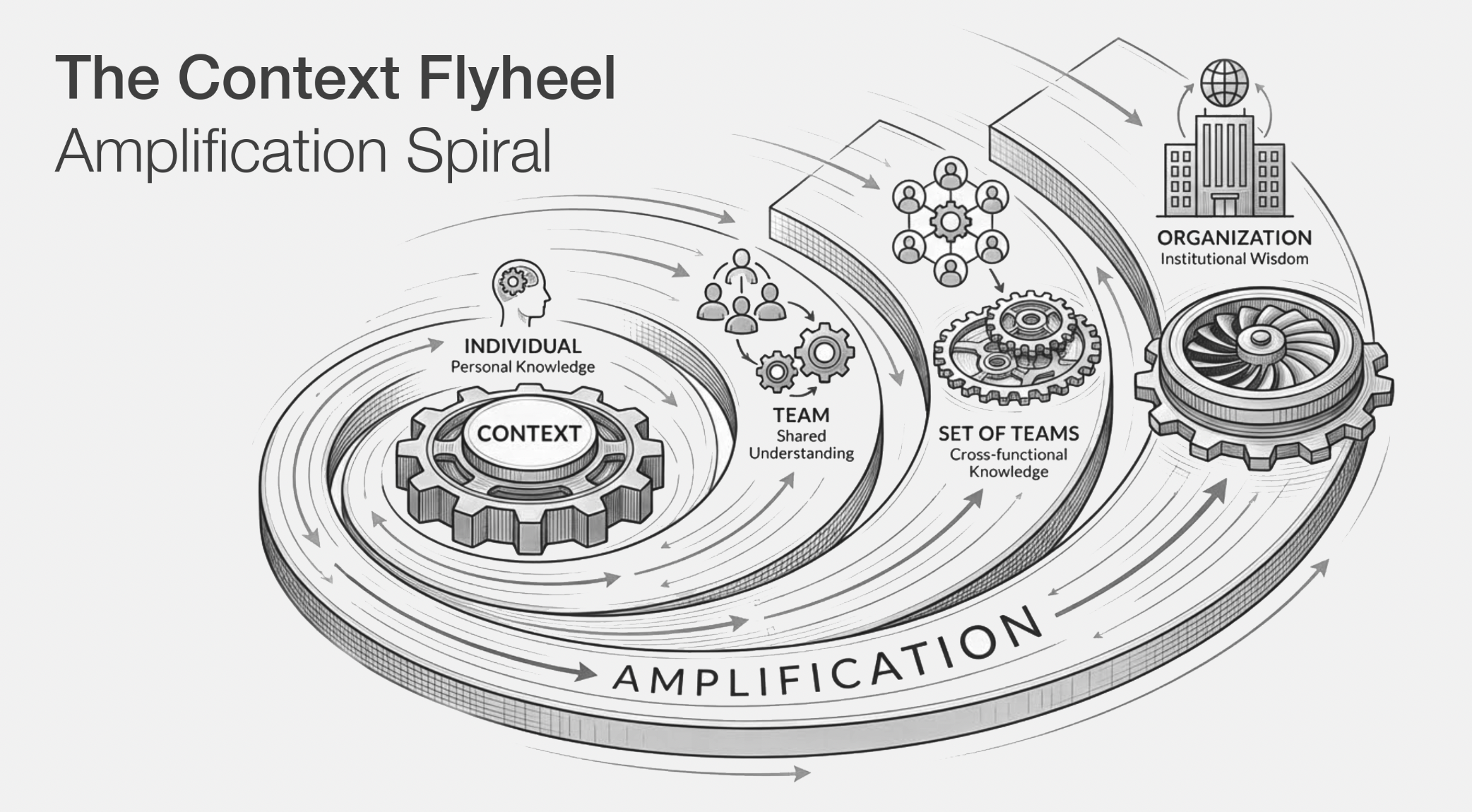

But someone has to own it

The flywheel needs ownership. Today, it's usually the senior engineer who cares enough to write a rules file. That doesn't scale. Whether it's a developer experience team, a platform team, embedded context engineers in squads, or a dedicated role matters less than the principle: if nobody owns it, it rots. We learned this with docs, with infrastructure, with security. We're about to learn it again with context.

Ownership means three things:

Maintenance. Reviewing context for staleness, resolving conflicts, retiring what no longer applies. Not as a quarterly cleanup, but as part of the cycle.

Enablement. Making it easy for people to contribute: example context for common agent tasks, CLI tooling, evals that run in CI, so writing and testing context fits into the same workflow as writing and testing code.

Governance. Making sure what gets shared meets a quality bar. Evals are the backbone here: context that doesn't pass its evaluations doesn't get distributed. Add conflict detection, deprecation policies, and review processes, and you have a system that scales without losing coherence.
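To make the maintenance and governance ideas concrete, here is a minimal sketch of what a distribution gate could look like. Everything in it is an assumption for illustration: the `ContextItem` fields, the 0.9 pass-rate threshold, the 180-day review window, and the example item names are hypothetical, not part of any particular tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ContextItem:
    """A hypothetical unit of shared context, e.g. a skill or a rules file."""
    name: str
    eval_pass_rate: float  # fraction of evals passed, 0.0 to 1.0
    last_reviewed: date    # when a human last confirmed it still applies
    deprecated: bool = False


def distribution_blockers(item, today, min_pass_rate=0.9, max_age_days=180):
    """Return the reasons an item should not be distributed (empty list means OK).

    The default thresholds are illustrative assumptions, not recommendations.
    """
    reasons = []
    if item.deprecated:
        reasons.append("deprecated")
    if item.eval_pass_rate < min_pass_rate:
        reasons.append("evals below threshold")
    if today - item.last_reviewed > timedelta(days=max_age_days):
        reasons.append("stale: needs review")
    return reasons


def distributable(items, today, **policy):
    """Keep only the items that clear every governance check."""
    return [i for i in items if not distribution_blockers(i, today, **policy)]


# Hypothetical example data.
today = date(2026, 2, 26)
items = [
    ContextItem("payments-retry-skill", 0.97, date(2026, 1, 10)),
    ContextItem("legacy-orm-rules", 0.95, date(2025, 3, 1)),
    ContextItem("api-naming-conventions", 0.70, date(2026, 2, 1)),
]

for item in items:
    print(item.name, distribution_blockers(item, today) or "ok")
```

Run in CI, a check like this would let the first item through, flag the second for review, and hold back the third until its evals pass. The design choice worth noting is that the gate returns reasons rather than a bare yes or no, so whoever owns the context knows what to fix.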

The question

You've invested in getting agents to write code. You've invested in the models, the tools, the infrastructure.

What have you invested in the context that makes all of it work?

The teams that compound that context, cycle after cycle, will be the ones that pull ahead. Not because they have better AI. Because they have better context. And context, unlike models, doesn't commoditise.

That's the flywheel.