23 Mar 2026 · 6 minute read

With Composer 2, Cursor targets longer coding tasks with lower pricing

AI coding tools can produce code by the bucketful, but they still struggle when a given task spreads across multiple files and requires repeated tool calls and course corrections. That’s the precise problem that Cursor is targeting with Composer 2, an updated version of its coding model that the company says performs better on longer tasks at a lower cost.

Cursor, the AI code editor built by startup Anysphere, first introduced Composer last October as an agent model trained for software engineering inside large codebases. At launch, the company described it as a mixture-of-experts (MoE) language model trained with reinforcement learning, with access to tools such as file editing, terminal commands, grep, and codebase-wide semantic search.

Cursor also said Composer was tuned for fast interactive use, claiming it generated output four times faster than similar models on the company's own benchmarks.

The latest incarnation keeps that same basic direction, but with an emphasis on benchmark gains, longer coding tasks, and lower token pricing.

Benchmarking Composer 2

Composer, in its initial guise, was pitched as a fast coding agent that could use tools inside Cursor’s editor to complete tasks. The company said it was trained on real-world software engineering challenges and evaluated on “CursorBench,” an internal benchmark based on requests from its own engineers and researchers.

Cursor says the newer model can handle coding jobs requiring hundreds of actions, and it published higher scores for Composer 2 than for Composer 1 and 1.5 on CursorBench, Terminal-Bench 2.0, and SWE-bench Multilingual. In the company’s numbers, Composer 2 reached 61.3 on CursorBench, compared with 38.0 for Composer 1.

Cursor also says the model is faster and cheaper in its own comparisons, with a “fast” Composer 2 variant shown delivering higher token throughput than competing models while running at a lower price per output token.

Pricing, in particular, is a core part of the pitch. Cursor lists Composer 2 at $0.50 per million input tokens and $2.50 per million output tokens, alongside the faster variant priced at $1.50 and $7.50 respectively. By comparison, the original Composer was priced at $1.25 per million input tokens and $10.00 per million output tokens, which is 2.5 times the standard Composer 2 rate for input and four times the rate for output.
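To make the per-token rates more concrete, here is a minimal sketch that applies the prices quoted above to a single long-running task. The workload size (2 million input tokens and 200,000 output tokens) is a made-up illustration, not a figure from Cursor, and the dictionary keys are hypothetical labels rather than real API model identifiers.

```python
# Prices in USD per million tokens, as quoted in the article.
# The keys are hypothetical labels, not official model identifiers.
PRICES = {
    "composer-1": {"input": 1.25, "output": 10.00},
    "composer-2": {"input": 0.50, "output": 2.50},
    "composer-2-fast": {"input": 1.50, "output": 7.50},
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one task at the listed per-million-token rates."""
    rates = PRICES[model]
    total = input_tokens * rates["input"] + output_tokens * rates["output"]
    return total / 1_000_000


# Assumed workload for illustration only: an agentic task that reads far
# more tokens than it writes.
INPUT_TOKENS = 2_000_000
OUTPUT_TOKENS = 200_000

for model in PRICES:
    cost = task_cost(model, INPUT_TOKENS, OUTPUT_TOKENS)
    print(f"{model}: ${cost:.2f}")
```

Under these assumed token counts, the standard Composer 2 rate comes to a third of the original Composer's cost, while the fast variant lands at the same total as the original. The ratio shifts with the input-to-output mix, so a real comparison would need actual usage data.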

The big debate: Who owns the underlying model?

AI coding tools like Cursor and Windsurf were built atop models from companies such as OpenAI, Anthropic, and Google, wrapping that underlying intelligence in developer-friendly editors. While that setup helped them move quickly, it also left them dependent on a small group of model providers for performance, pricing, and access.

Now, some of these platforms are starting to build more of that intelligence themselves. Composer 2 is representative of that shift, as Cursor pushes its coding agent toward longer, multi-step tasks while taking greater control over how the model is trained and deployed.

Developers are paying close attention to the underlying models, too. In one particularly fervent Hacker News thread, users speculated about whether Composer 2 was built on top of Kimi K2.5, an open model developed by Chinese AI firm Moonshot AI, with others questioning whether Cursor had properly disclosed its use.

Some commenters, however, pushed back on that assessment.

“‘Just’ Kimi K2.5 with RL (reinforcement learning) — people really misunderstand how difficult it is to achieve these results with RL,” one commenter wrote. “Cursor’s research team is highly respected within the industry, and what they’ve done is quite impressive. Before people go jumping to conclusions about model theft, it's worth considering the possibility that they did reach an agreement with Moonshot which their researchers were not aware of.”

Cursor has since brought a little more clarity to the mix. In a series of posts on X, Lee Robinson, VP of developer education at Cursor, said that Composer 2 is indeed built on top of Kimi K2.5. He added that roughly a quarter of the compute in the final model comes from the base, with the remainder coming from Cursor’s continued pretraining and reinforcement learning.

Moonshot later confirmed the arrangement publicly, writing that it was “proud to see Kimi-k2.5 provide the foundation” and describing Cursor’s use of the model as part of an “authorized commercial partnership” via Fireworks AI.

In short, Composer 2 sits somewhere between a wrapper on an external model and a system trained entirely in-house: it runs on top of an external base model, with most of the work coming from additional training and tooling layered on afterwards.

And therein lies one of the advantages of open models: they give companies like Cursor a strong foundation to compete more effectively with the major AI labs.

Resources

Related Articles

Terminal-Bench: Benchmarking AI Agents on CLI Tasks

23 Jul 2025

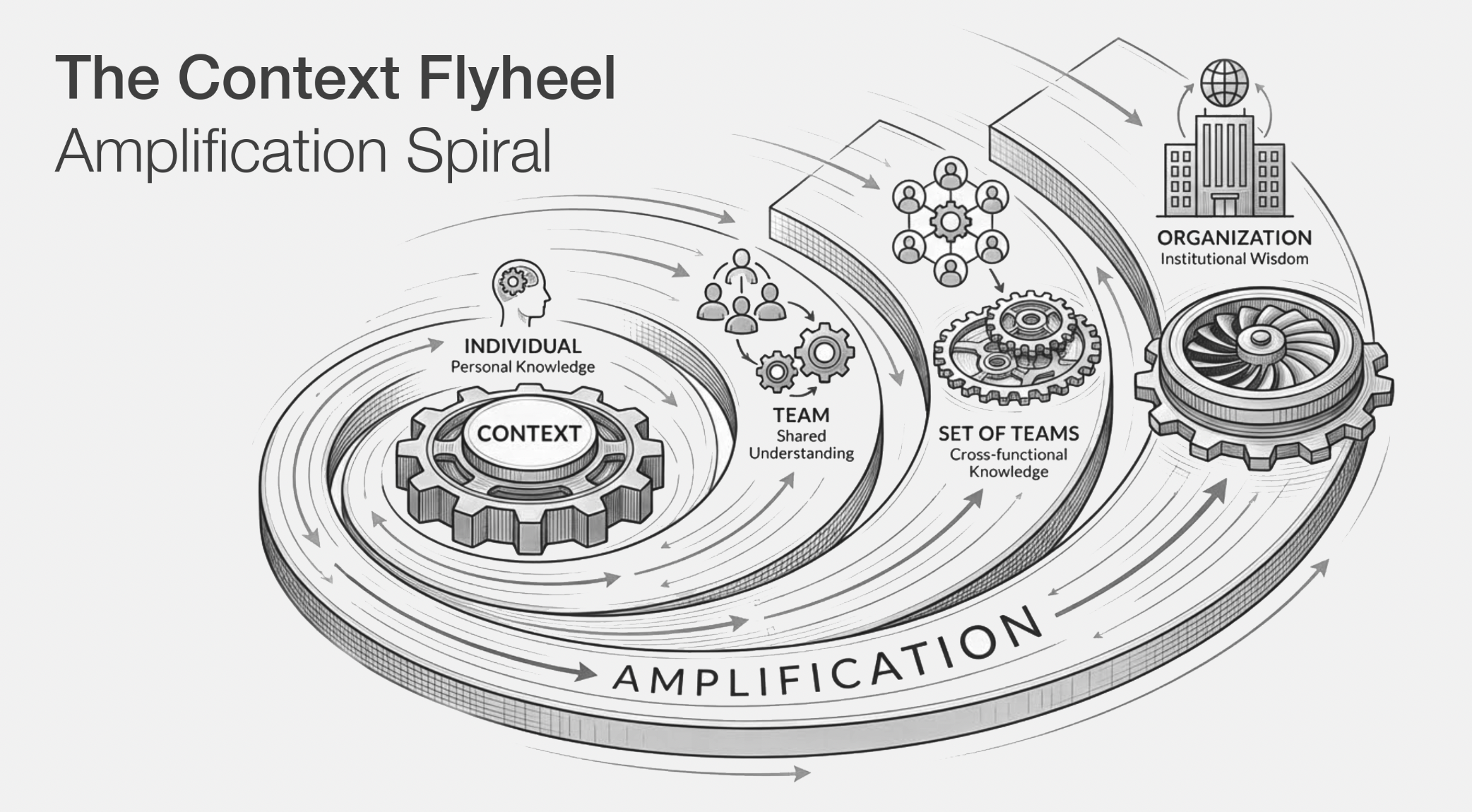

The Context Flywheel: Why the Best AI Coding Teams Will Win on Context

26 Feb 2026

Wrapper’s delight: Cursor and Windsurf’s shift to home-grown AI marks a push for independence

14 Nov 2025

Cursor turns its internal security agents into reusable templates

18 Mar 2026